New users commonly ignore this FAQ and simply try to run jobs without understanding what they are doing. If you have never used a high-performance computing cluster, or are not familiar with SeaWulf, YOU WILL WANT to read and follow the instructions in the FAQ below (start with the Getting Started Guide!).

Online storage for sharing data (bwSync&Share)

The state service bwSync&Share is an online storage service for employees and students of universities and colleges in Baden-Württemberg. It has been operated at KIT since January [...] and enables users to synchronize or exchange their data between different computers, mobile devices, and users.

The state service bwDataArchive offers a technical infrastructure for long-term archiving of scientific data. bwDataArchive is available especially for members of universities and public research institutions in Baden-Württemberg. Data archiving is carried out at KIT and includes reliable storage of even large data sets for a period of ten years or more. The service enables a qualified implementation of the recommendations of the German Research Foundation (DFG) on good scientific practice (recommendation 7 on the securing and storage of research data).

Mass storage for scientific data (LSDF Online Storage)

The service "LSDF Online Storage" provides users of KIT with access to data storage that is specifically designed for scientific measurement data and simulation results from data-intensive scientific disciplines. The LSDF Online Storage is operated by the Steinbuch Centre for Computing. Access is guaranteed via standard protocols. Backup and protection of the data are carried out according to the current state of the art. The service is not suitable for storing personal data. More information: Mass storage for scientific data (LSDF Online Storage).

More information about this topic can be found here and in the corresponding documentation of the HPC systems.

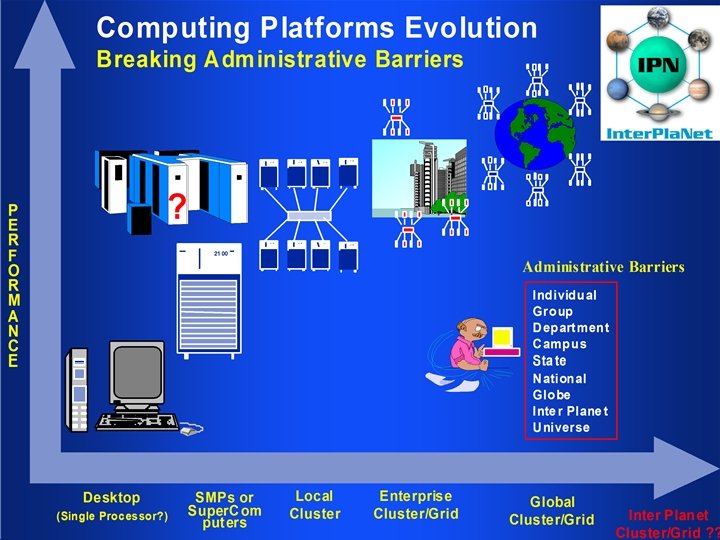

Future applications will place higher demands on the storage subsystem of HPC systems. To meet these demands, the SCC provides an on-demand file system on current and future HPC systems. It is created exclusively for an HPC job and is available only on the allocated compute nodes for the duration of the simulation.

See also the User Guides of the various HPC systems, and the slides and presentations on productive Lustre installations on the page Lustre.

At present there are a total of 10 Lustre file systems with a storage capacity of 9573 TiB, 61 servers and 2600 clients on these HPC systems.

The SCC operates storage systems for different purposes. So-called parallel file systems are directly connected to the clusters; they are characterized by very high throughput and very good scalability. Since the beginning of 2005, Lustre has been used as the parallel file system for the clusters at the SCC. It currently serves the research high-performance computer HoreKa and the state computer bwUniCluster.

Cost of this service will be calculated according to the budgeting rules applied to your organization.

An essential task for the SCC is to support users with their technological and scientific applications, which do not have to lie purely within the HPC area. In addition, support and consulting are provided for components of software development such as compilers, debuggers, analysis tools, and MPI, as well as for open source codes and numerical libraries. Research-related and research-accompanying support is provided by the SimLabs (Simulation Laboratories), which currently cover four research areas: Earth (climate) and Environment, NanoMicro, Energy, and Astroparticle Physics. For more information on SimLabs, please visit the Scientific Computing and Simulation Department. In addition to supporting research and development with hardware, software, and many years of know-how in these two areas, teaching in the HPC field and its environment is of equal importance. The page "Teaching, Training and Further Education" provides information about offers in the field of teaching.
